Jensen Huang: $1 Trillion Won't Meet AI Demand

Jensen Huang Says $1 Trillion in AI Infrastructure Orders Is Just the Beginning

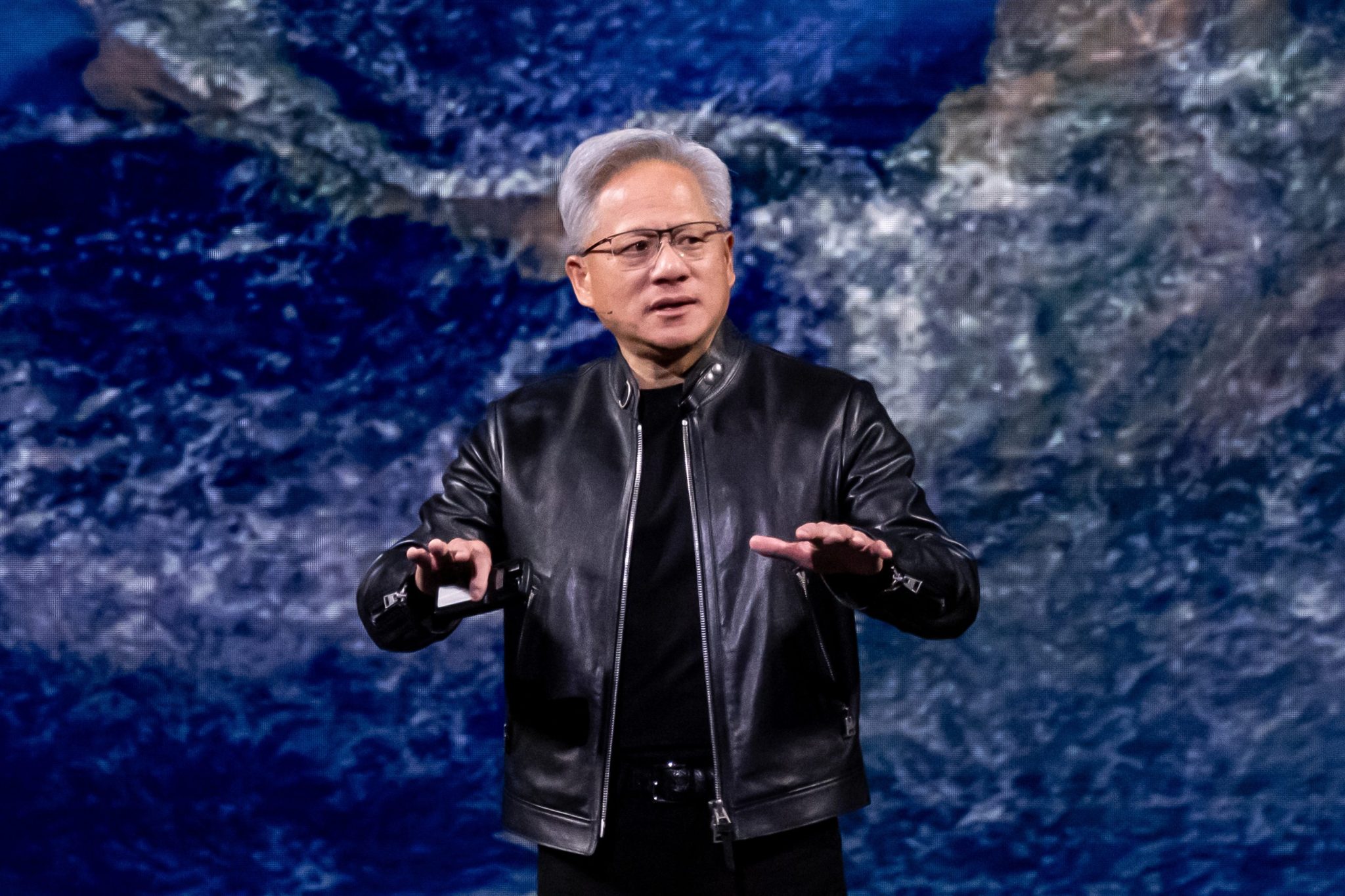

On March 16, 2026, Nvidia CEO Jensen Huang took the stage at the SAP Center in San Jose, California, before more than 30,000 attendees from 190 countries, and delivered a message that reframed the scale of the current AI buildout: a $1 trillion projection in committed purchase orders for Nvidia's Blackwell and Vera Rubin chip architectures through 2027 — and he called it deliberately conservative.

"I see through 2027 at least $1 trillion. In fact, we are going to be short," Huang told the GTC 2026 audience. During the subsequent Q&A, he went further: "$1 trillion is only Blackwell plus Rubin, only through 2027, and it is likely to be larger than what I'm showing."

That $1 trillion figure is already double the $500 billion projection Huang made at the same event a year earlier — a doubling that tracks with Nvidia's own financial performance. The company posted record full-year revenue of $215.9 billion for fiscal 2026, up 65% from the prior year, with data center revenue alone reaching $197.3 billion for the full year and $62.3 billion in Q4, up 75% year-over-year.

"The Largest Buildout of Human History"

Huang framed the moment in sweeping terms at GTC 2026. "We are completely resetting and starting the largest buildout of human history," he declared. He had previewed the claim months earlier at the World Economic Forum in Davos, Switzerland, where he said on January 21, 2026, that "there are trillions of dollars of infrastructure that needs to be built out" and that the world was only "a few hundred billion dollars into it" at that point.

The numbers behind the buildout are starting to support the scale of that language. According to CNBC, combined capital expenditure from the four major hyperscalers — Alphabet, Amazon, Meta, and Microsoft — could approach $700 billion in 2026, based on their quarterly forecasts. Nvidia CFO Colette Kress stated that the company believes total AI infrastructure investment could reach $3 trillion to $4 trillion annually by 2029 or 2030.

Nvidia's own infrastructure footprint reflects this momentum. According to the company's official blog, Nvidia Cloud Partners cumulatively deployed more than 1 million Nvidia GPUs in AI factories globally by GTC 2026, representing more than 1.7 gigawatts of AI capacity. That is up from approximately 400,000 GPUs and 550 megawatts reported at GTC 2025, or about 2.5 times the GPU count and roughly three times the capacity.

Huang described AI as a "five-layer cake" requiring energy, chips, cloud infrastructure, models, and applications — all of which must scale together — with Nvidia positioned at the center of the stack. "Finally, AI is able to do productive work, and therefore the inflection point of inference has arrived," he said at GTC 2026.

Vera Rubin, Groq 3, and the Next Generation of AI Hardware

The infrastructure ambitions Huang outlined are anchored in a hardware roadmap that Nvidia used GTC 2026 to lay out in significant detail.

The headline product announcement was the Vera Rubin AI platform, a full-stack system comprising seven co-designed chips and five rack-scale configurations. It succeeds the current Blackwell generation, with data center shipments expected in the second half of 2026. The flagship NVL72 configuration packs 72 Rubin GPUs and 36 Vera CPUs, delivering 3.6 exaFLOPS of NVFP4 inference performance. Nvidia claims the platform offers 10x lower inference cost per token than Blackwell. Each Rubin GPU carries 336 billion transistors, features 288GB of HBM4 memory with 22 TB/s of bandwidth, and delivers 50 petaflops of FP4 inference performance.
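As a rough consistency check, the rack-level figure quoted above can be reproduced from the per-chip figure. The sketch below assumes the NVL72 total is a plain sum over its GPUs, with no interconnect or utilization losses, and that the per-chip FP4 number and the rack-level NVFP4 number describe the same metric. The constant and function names are illustrative and do not come from Nvidia.

```python
# Figures as quoted in the article; all names here are illustrative.
GPUS_PER_NVL72 = 72
PFLOPS_PER_GPU = 50        # petaflops of FP4 inference per Rubin GPU
HBM4_GB_PER_GPU = 288      # gigabytes of HBM4 per Rubin GPU
PFLOPS_PER_EXAFLOP = 1000


def rack_exaflops(gpus=GPUS_PER_NVL72, pflops_per_gpu=PFLOPS_PER_GPU):
    """Aggregate peak inference throughput, assuming a plain sum over GPUs."""
    return gpus * pflops_per_gpu / PFLOPS_PER_EXAFLOP


def rack_hbm_terabytes(gpus=GPUS_PER_NVL72, gb_per_gpu=HBM4_GB_PER_GPU):
    """Total HBM4 capacity in decimal terabytes (1 TB = 1000 GB)."""
    return gpus * gb_per_gpu / 1000


if __name__ == "__main__":
    print(f"Peak inference: {rack_exaflops():.1f} exaFLOPS")
    print(f"HBM4 capacity:  {rack_hbm_terabytes():.1f} TB")
```

Under those assumptions, 72 GPUs at 50 petaflops each come to 3.6 exaFLOPS, which matches the NVL72 figure. The same multiplication puts total HBM4 at roughly 20.7 TB per rack, a derived number rather than one stated in the announcement.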

"Rubin arrives at exactly the right moment, as AI computing demand for both training and inference is going through the roof," Huang said.

Nvidia also unveiled the Nvidia Groq 3 Language Processing Unit (LPU) at GTC 2026 — the first chip to emerge from Nvidia's $20 billion acquisition of Groq assets completed in December 2025, with shipments expected in Q3 2026. Looking further ahead, Huang previewed the Feynman architecture, targeted for 2028 on TSMC's 1.6nm process.

AI Tokens as Engineer Compensation: A New Recruiting Tool

Alongside the hardware announcements, one of the most widely discussed ideas to come out of GTC 2026 was Huang's proposal to restructure how engineers are compensated, adding AI token budgets as a fourth component of tech pay alongside salary, bonus, and equity. He expanded on the idea in an appearance on the All-In Podcast, recorded on the final day of the conference.

The argument, as Huang made it, is rooted in productivity math. According to Tom's Hardware, Huang said on the All-In Podcast that he would be "deeply alarmed" if an engineer paid $500,000 a year did not consume at least $250,000 worth of AI tokens to get their job done. The logic: an engineer not using AI at that level is leaving compounding leverage on the table.

"I'm going to give them probably half of that on top of it as tokens so that they could be amplified 10X. Of course, we would," Huang said, as reported by Yahoo Finance.

He extended that thinking to Nvidia's entire engineering organization: "I could totally imagine in the future every single engineer in our company will need an annual token budget." And he predicted the trend would spread across Silicon Valley: "It is now one of the recruiting tools in Silicon Valley: how many tokens come along with my job."

That observation already appears to be playing out. According to Yahoo Finance and other outlets, Thibault Sottiaux, engineering lead for OpenAI's Codex, publicly noted on X that candidates are asking about compute access in interviews, which corroborates Huang's read on the shifting priorities of technical talent.

When asked on the All-In Podcast whether Nvidia is currently spending around $2 billion a year on tokens for its engineering team, Huang's answer was unambiguous: "We're trying to."

42,000 Humans, Hundreds of Thousands of Digital Employees

The token compensation pitch is inseparable from a broader argument Huang made at GTC 2026 about the future composition of the workforce. "I have 42,000 biological employees, and I'm going to have hundreds of thousands of digital employees," he told CNBC — referring to AI agents he expects will operate alongside human engineers.

That framing matters for how the token budget proposal should be read. Huang is not simply suggesting that AI tools are useful productivity aids. He is arguing that the boundary between human and AI labor is collapsing fast enough that the token consumption of a human engineer — their ability to direct and amplify AI agents — becomes a measurable proxy for their output. In that model, a token budget is not a perk. It is infrastructure.
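To put the token figures in proportion, the arithmetic can be sketched as follows. This is a back-of-the-envelope illustration, not Nvidia's actual budgeting. It uses only numbers quoted in this article: the $500,000 salary, the $250,000 token threshold, the roughly $2 billion annual spend Huang said Nvidia is trying to reach, and his 42,000 headcount. It also makes the hypothetical assumption that the spend is spread evenly across all employees, even though the $2 billion was described as spending for the engineering team. Function names are made up for illustration.

```python
# Figures as quoted in the article; all names here are illustrative.
SALARY_USD = 500_000
TOKEN_THRESHOLD_USD = 250_000
TARGET_ANNUAL_TOKEN_SPEND_USD = 2_000_000_000
HEADCOUNT = 42_000  # all employees, not only engineers (an assumption)


def token_share_of_salary(tokens_usd, salary_usd):
    """Token budget expressed as a fraction of base salary."""
    return tokens_usd / salary_usd


def spend_per_head(total_usd, headcount):
    """Average annual token spend if divided evenly across headcount."""
    return total_usd / headcount


def engineers_at_threshold(total_usd, per_engineer_usd):
    """How many engineers a total budget covers at a given per-person level."""
    return total_usd // per_engineer_usd


if __name__ == "__main__":
    share = token_share_of_salary(TOKEN_THRESHOLD_USD, SALARY_USD)
    per_head = spend_per_head(TARGET_ANNUAL_TOKEN_SPEND_USD, HEADCOUNT)
    covered = engineers_at_threshold(
        TARGET_ANNUAL_TOKEN_SPEND_USD, TOKEN_THRESHOLD_USD
    )
    print(f"Token share of salary: {share:.0%}")
    print(f"Even split per employee: ${per_head:,.0f}")
    print(f"Engineers covered at threshold: {covered:,}")
```

On these assumptions, an even split of $2 billion works out to about $47,600 per employee. The same budget would fund the full $250,000 level for 8,000 engineers. Both outputs are only as good as the even-split assumption, which the article does not support either way.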

Why This Matters: Context and Industry Implications

The scale of what Huang described at GTC 2026 has implications that extend well beyond Nvidia's own balance sheet, impressive as those numbers are. The $1 trillion purchase order figure — conservative, by Huang's own account — represents a fundamental reallocation of global capital toward AI infrastructure at a speed and scale that has no clear historical precedent.

The hyperscaler spending data provides independent confirmation of the direction, if not the full magnitude, of the trend. Combined 2026 capex from Alphabet, Amazon, Meta, and Microsoft could approach $700 billion. Nvidia's own CFO has projected that annual global AI infrastructure investment could reach $3 trillion to $4 trillion by 2029 or 2030. Goldman Sachs, according to CNBC, estimates that AI could automate tasks accounting for 25% of all work hours in the U.S.

The token compensation story is a narrower but telling signal of how that infrastructure investment is beginning to reshape daily work. If engineers at companies like Nvidia are being given token budgets worth half their base salary — and if candidates are already negotiating compute access as part of job offers — then the economics of knowledge work are shifting in real time. The question of how much AI a worker uses is becoming, in some corners of the industry, as consequential as the question of how skilled that worker is in the first place.

Huang's Davos comments in January 2026 set up the GTC announcement with a useful frame: the world is early in this buildout, not late. "There are trillions of dollars of infrastructure that needs to be built out," he said then. The $1 trillion in purchase orders announced about eight weeks later, described as a floor rather than a ceiling, is the most concrete evidence yet that the spending is moving to match the ambition.

What's Next

In the near term, the key milestones to watch are the rollout of Vera Rubin data center systems in the second half of 2026, Groq 3 LPU shipments expected in Q3 2026, and whether hyperscaler capex continues tracking toward the $700 billion combined figure forecast for this year. Nvidia's next earnings cycle will provide an early read on whether the $1 trillion purchase order backlog is translating into revenue at the pace the company projects.

On the workforce side, it remains to be seen how quickly the token compensation model Huang described spreads beyond Nvidia to the broader technology industry. The early signal from OpenAI's Codex team — candidates asking about compute access in interviews — suggests the conversation is already moving. Whether token budgets become a standard line item in tech compensation packages, or remain a practice specific to companies at the frontier of AI deployment, will likely depend on how rapidly AI agents prove their productivity value in day-to-day engineering work.

Huang's longer-range roadmap — including the Feynman architecture targeted for 2028 — and the CFO's projection of $3 to $4 trillion in annual AI infrastructure investment by 2029 or 2030 suggest Nvidia is planning for a demand curve that does not flatten anytime soon.

