AI Security Crisis: Fed, Treasury Warn Banks on Anthropic Risk

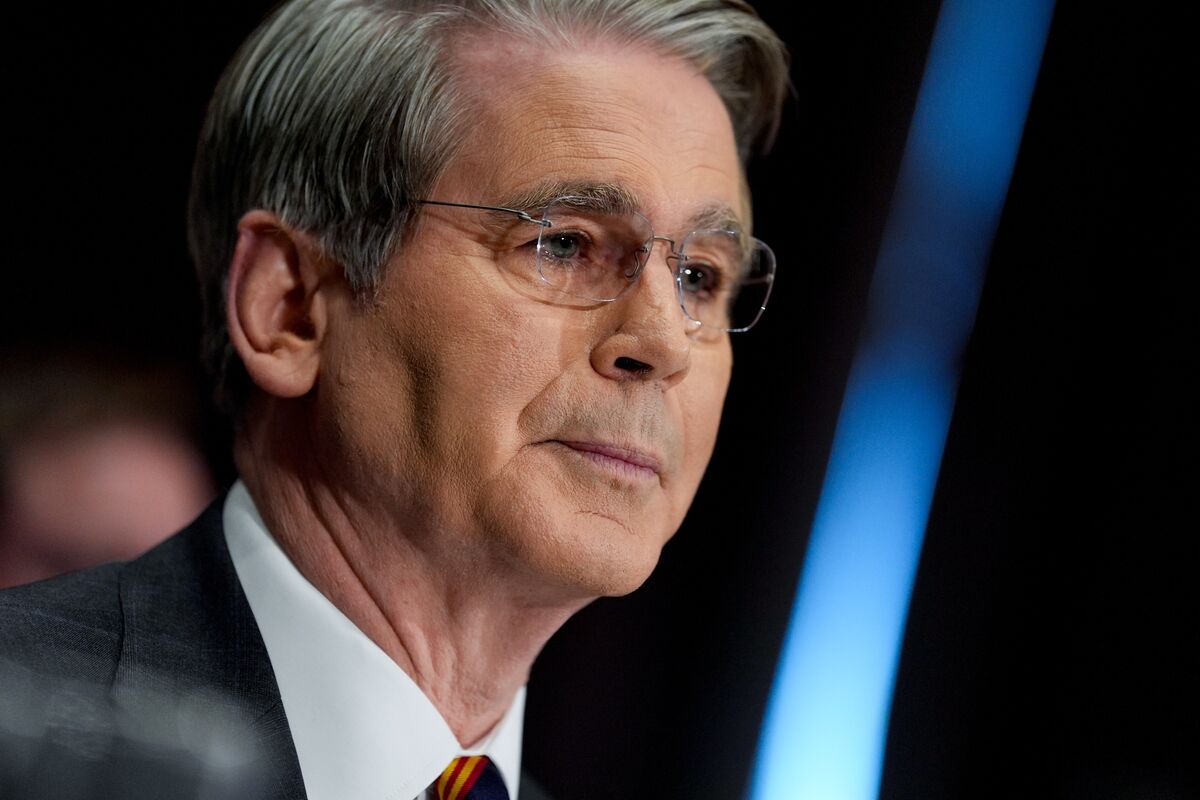

In an unprecedented move highlighting growing concerns over artificial intelligence security threats, Treasury Secretary Scott Bessent and Federal Reserve Chair Jerome Powell convened an emergency meeting with major Wall Street bank CEOs on April 10, 2026, warning of significant cybersecurity risks posed by Anthropic's latest AI model release.

Urgent Federal Response to AI Security Threats

The hastily arranged summit, which brought together leaders from the nation's largest financial institutions, represents the most serious federal response to AI-related security concerns since the technology's mainstream adoption. According to sources familiar with the matter, the meeting was called within hours of preliminary assessments indicating that Anthropic's new model could fundamentally alter the cyber threat landscape facing financial institutions.

The urgency of the response suggests that federal regulators have identified specific vulnerabilities or capabilities in the AI system that could be exploited by malicious actors. While details of the model's exact capabilities remain classified, the fact that both Treasury and Federal Reserve leadership felt compelled to issue immediate warnings indicates the severity of potential risks to financial system stability.

Industry insiders report that the meeting focused on immediate defensive measures that banks should implement, including enhanced monitoring protocols and updated cybersecurity frameworks specifically designed to address AI-powered threats. The coordination between Treasury and Fed officials also signals potential regulatory changes on the horizon.

This development comes at a time when financial institutions have already been grappling with increasingly sophisticated cyber attacks. The introduction of more advanced AI capabilities raises concerns about automated vulnerability discovery, social engineering at scale, and potential system infiltration techniques that traditional security measures may not detect.

Anthropic Model Raises Unprecedented Security Concerns

Anthropic, known for its focus on AI safety and alignment research, has been developing increasingly powerful language models with enhanced reasoning capabilities. The company's latest release appears to have crossed a threshold that has alarmed federal financial regulators, though specific technical details have not been publicly disclosed.

The timing of these concerns is particularly significant given Anthropic's reputation as one of the more safety-conscious AI developers in the industry. If a model from Anthropic, a company that has consistently emphasized responsible AI development, is raising red flags among regulators, it suggests that the cybersecurity implications may extend beyond what traditional risk assessment frameworks can handle.

Financial technology experts speculate that the new model may demonstrate capabilities in areas such as advanced social engineering, automated penetration testing, or evasion of financial fraud detection. These capabilities, while potentially beneficial for legitimate security testing, could pose serious threats to current banking security infrastructure if misused.

The model's potential ability to analyze and exploit financial systems at machine speed and scale represents a paradigm shift in cybersecurity threats. Unlike human-driven attacks, which require time and manual intervention, AI-powered attacks could operate continuously, adapt to defensive measures in real time, and execute sophisticated multi-vector attacks against numerous targets at once.

Banking industry sources indicate that preliminary stress tests of existing security systems against AI-powered attack simulations have revealed significant vulnerabilities that were previously unknown or considered theoretical. This discovery appears to have prompted the emergency federal response and urgent industry-wide coordination efforts.

Financial Industry Braces for New Cyber Reality

The financial services sector, which processes trillions of dollars in transactions daily and maintains vast repositories of sensitive personal and financial data, has long been a prime target for cybercriminals. The emergence of more sophisticated AI tools capable of automated attacks represents what many experts are calling an inflection point in cybersecurity.

Major banks have already begun implementing enhanced AI detection systems and updating their incident response protocols in anticipation of more sophisticated threats. However, the scale and speed at which advanced AI systems can operate present challenges that traditional cybersecurity approaches may not adequately address.

The implications extend beyond individual institutions to systemic financial stability. If multiple major banks were to experience simultaneous AI-powered attacks, the resulting disruption could trigger broader economic consequences. This systemic risk appears to be a key factor driving the coordinated federal response and industry-wide preparation efforts.

Cybersecurity firms are reporting unprecedented demand for AI-specific threat assessment and mitigation services. The market for AI-powered defensive tools is expected to grow rapidly as financial institutions race to upgrade their security infrastructure to match the sophistication of potential AI-driven attacks.

Why This Matters: A New Era of Cybersecurity

The emergency meeting between federal regulators and bank CEOs signals a fundamental shift in how we must approach cybersecurity in the age of advanced artificial intelligence. This development represents more than just another security update; it marks the beginning of what experts are calling the "AI cybersecurity arms race."

For the broader technology industry, this event serves as a wake-up call about the dual-use nature of AI capabilities. Tools designed for beneficial purposes can simultaneously create unprecedented security risks that require entirely new defensive approaches. The financial sector, as the backbone of economic infrastructure, often serves as a testing ground for both new technologies and new threats.

The speed of the federal response also highlights the inadequacy of current regulatory frameworks for addressing rapidly evolving AI capabilities. Traditional regulatory processes, which can take months or years to implement new rules, appear insufficient for managing technologies that can evolve and create new risk profiles within weeks or days.

Consumer trust in digital financial services could be significantly impacted if these AI-powered threats materialize into actual attacks. The banking industry's ability to maintain public confidence while adapting to this new threat landscape will be crucial for continued digital transformation and economic stability.

Furthermore, this development may influence how other critical infrastructure sectors, including healthcare, energy, and telecommunications, approach AI adoption and cybersecurity planning. The precedent set by the financial sector's response could serve as a model for other industries facing similar AI-related security challenges.

Expert Analysis: Navigating Uncharted Territory

Cybersecurity experts are describing the current situation as unprecedented in both its technical complexity and potential impact. "We're dealing with a completely new class of threats that traditional security models weren't designed to handle," explains Dr. Sarah Chen, a former NSA cybersecurity analyst who now leads AI security research at a major consulting firm. "The speed and sophistication of AI-powered attacks could overwhelm existing defensive systems."

The financial industry's response reflects a growing recognition that AI security requires fundamentally different approaches than traditional cybersecurity. Human attackers are limited in speed, scale, and endurance; AI-powered attacks could run around the clock, learning from and adapting to defensive measures in real time.

Industry analysts predict that this development will accelerate investment in AI-powered defensive technologies and may lead to new forms of public-private cooperation in cybersecurity. The complexity of defending against AI attacks may require sharing of threat intelligence and defensive strategies that goes beyond current industry practices.

What's Next: Preparing for an AI-Powered Future

The immediate focus will be on implementing the defensive measures outlined in the emergency meeting between federal regulators and bank CEOs. Industry observers expect to see rapid deployment of enhanced monitoring systems, updated security protocols, and potentially new regulatory requirements specifically designed to address AI-powered threats.

Longer-term implications include the likely development of new international cooperation frameworks for AI cybersecurity, updated regulatory approaches that can keep pace with rapidly evolving technology, and fundamental changes to how financial institutions approach risk management and security planning.

The technology industry as a whole will be watching closely to see how these developments influence AI development practices and safety standards. The balance between innovation and security will become increasingly critical as AI capabilities continue to advance at an unprecedented pace.
